Bio: Dr. Sridhar is an associate professor of clinical ophthalmology at Bascom Palmer Eye Institute, Miami. DISCLOSURES: Dr. Sridhar is a consultant to Alcon, DORC, Genentech/Roche and Regeneron Pharmaceuticals.

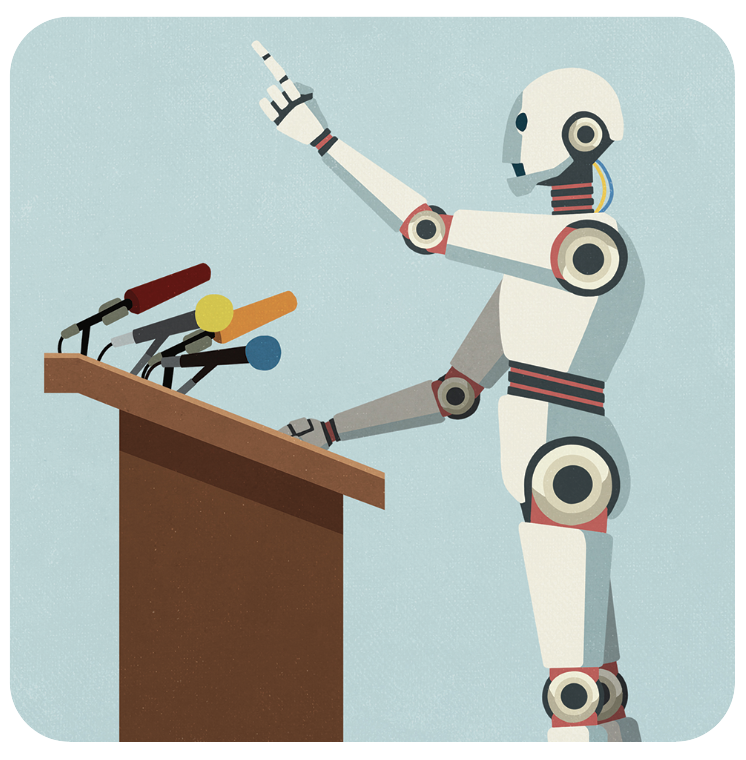

Artificial intelligence is no longer a distant abstraction reserved for research labs or speculative headlines. In 2025, AI quietly embedded itself into daily life: drafting emails; summarizing articles; editing images; and increasingly shaping what we see on social media. The result is a digital ecosystem where not all voices belong to humans and the line between authored content and algorithmic output is increasingly difficult to detect.

One of the most visible manifestations of this shift is the rise of AI influencers. These are entirely synthetic personas with computer-generated faces. While the first versions of AI influencers were obviously flawed and non-human, newer models have improved, with closer attention to consistent aesthetics, curated personalities and carefully optimized engagement strategies. Outside of medicine, examples already abound: virtual fashion models partnering with luxury brands; AI lifestyle influencers offering wellness advice; and synthetic “experts” explaining finance, travel or productivity. While some disclose their artificial nature openly, others don’t. Regardless, many attract large followings, generate revenue and shape opinions just as effectively as human creators. In some ways, AI influencers have a higher “ceiling” of influence, given their ability to post constantly, adapt instantly and avoid reputational damage.

Medicine, including ophthalmology, will not remain insulated from this trend. The idea of an AI medical influencer is both tempting and troubling. On the positive side, AI-driven accounts could disseminate high-quality educational content at scale, counter misinformation and improve baseline health literacy while reducing human workload. On the other hand, they risk flattening nuance, obscuring uncertainty and blurring accountability. Unlike a physician, an AI influencer can’t truly disclose conflicts, accept liability or contextualize advice within the messiness of real-world patient care. For a field as visually driven and technically complex as ophthalmology, polished explanations may paradoxically increase misunderstanding by appearing more certain than the science allows.

The ethical challenges are therefore substantial. Transparency becomes paramount: Audiences should know when content is AI-generated, who built it and whose interests it serves. There is also the question of detectability. While AI-generated images and text are becoming more difficult to identify, subtle cues remain, such as an overly consistent tone, implausible posting frequency, absence of lived experience or a lack of engagement with genuine clinical uncertainty. Physicians and trainees will need a new form of digital literacy, understanding not only how to post responsibly, but how to critically evaluate who or what is speaking. Being “AI-aware” may soon be as important as being evidence-aware.

AI influencers aren’t inherently good or bad; rather, they are tools that reflect the incentives and values embedded in their design. They will exist regardless of our feelings about them; thus, the challenge ahead is not to reject them outright, but to recognize their limitations, anticipate their impact and ensure that human judgment remains central to medical discourse. In a landscape increasingly populated by synthetic voices, authenticity, transparency and professional accountability may become the most valuable signals we have. RS